One of the drivers behind technology development is the quest for human equivalence – the point where technology performs at a level of functioning that is equal to or greater than the functioning of the human brain. While it is speculative at best to estimate if and when such a goal will be achieved, recent history shows that the capability and capacity of technology are climbing a rather steep slope. And if we are to trust the popular application of Moore’s law, technology’s prowess is doubling every 18-24 months. At that rate, it doesn’t take much to project a future wherein technology is closing in on human equivalence.
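
To get a feel for what that doubling rate implies, here is a minimal sketch of the compounding arithmetic. It assumes "prowess" can be treated as a single number that doubles on a fixed schedule, which is a simplification; the function name and the ten-year horizon are only illustrative.

```python
def growth_factor(years: float, doubling_months: float) -> float:
    """Multiplicative growth after `years` if capability doubles every `doubling_months`."""
    doublings = years * 12.0 / doubling_months
    return 2.0 ** doublings


# Compare the two ends of the 18-24 month range over a hypothetical 10-year horizon.
for months in (18, 24):
    factor = growth_factor(10, months)
    print(f"Doubling every {months} months: about {factor:,.0f}x in 10 years")
```

Even at the slower end of the range, a decade of steady doubling yields a 32-fold increase, which is why the slope looks so steep.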

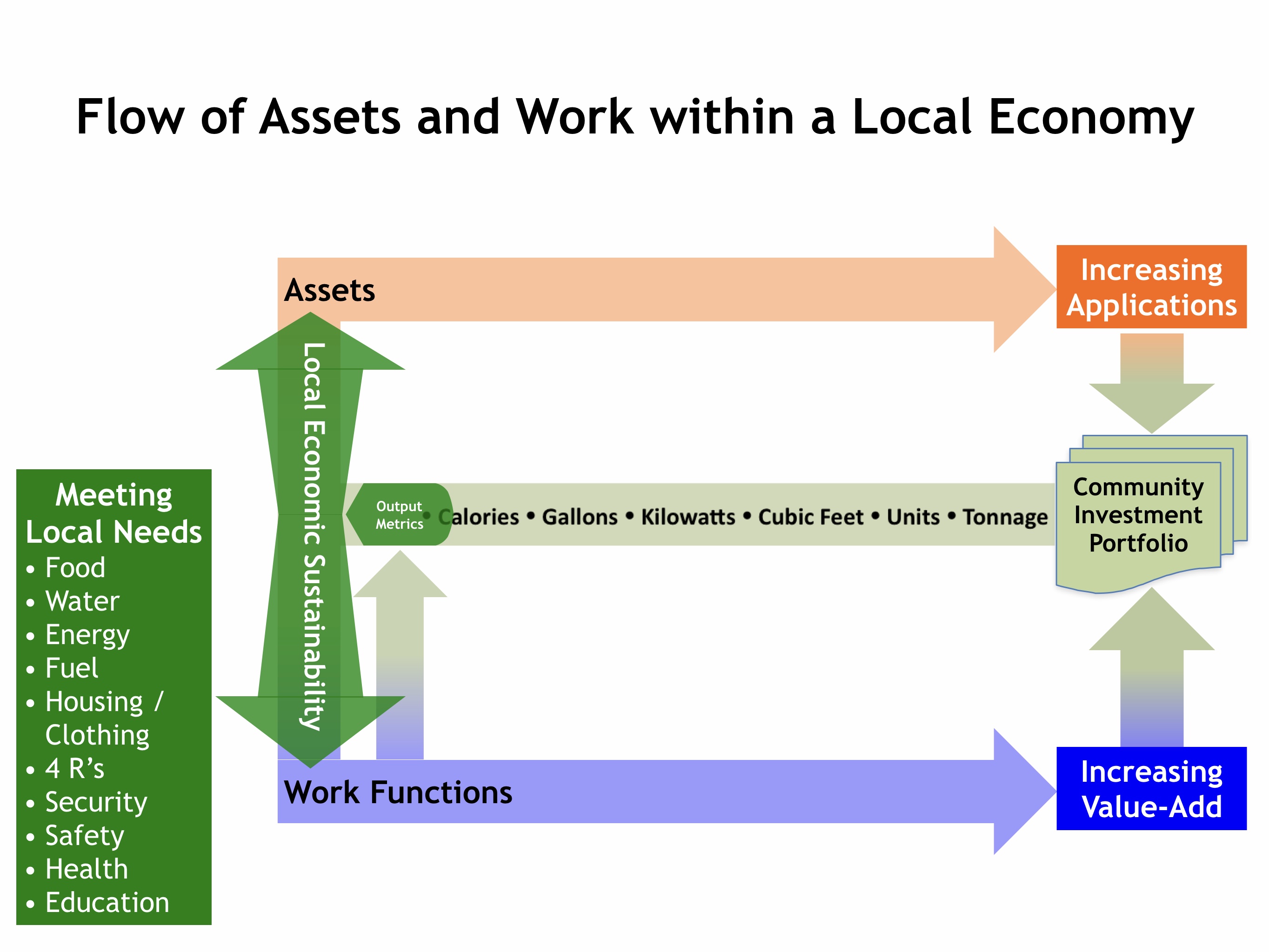

As a trend develops, it is useful to be able to track its progress and anticipate its trajectory. It is critical to choose or craft a set of markers that indicate a trend’s speed, depth, and scope as it gains influence and becomes an impetus for change. While there are many markers from which to choose, the most durable and universally applicable set concerns value added: in particular, where and how value is added.

The simple Wikipedia example about making miso soup is a good one to illustrate how advances in technology change the value-added equation. First, the value of the soup as the end product is composed of the value added by the farmer to grow the raw product, soybeans, plus the value added by the processor to the soybeans to produce tofu, plus the value added by the chef to the tofu to prepare the soup. This “value package” utilizes a combination of equipment, input, labor, and know-how applied in various locations, stages, and timeframes, and is based on a specific capability and capacity level of technology.
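
The additive structure of that value package can be sketched in a few lines. The figures below are made-up placeholders in arbitrary currency units, not data from the Wikipedia example; only the three stages come from the text.

```python
# Hypothetical value added at each stage (arbitrary units, for illustration only).
value_added = {
    "farmer (soybeans)": 1.00,
    "processor (tofu)": 1.50,
    "chef (miso soup)": 2.50,
}

# The value of the end product is the sum of the value added along the chain.
soup_value = sum(value_added.values())

for stage, added in value_added.items():
    share = added / soup_value
    print(f"{stage}: {added:.2f} ({share:.0%} of final value)")
print(f"miso soup: {soup_value:.2f}")
```

Changing any one entry, or merging two stages into one, shows how a shift in technology reshapes where the value is added.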

What happens when technology develops further? There are several possibilities: the soybeans are grown in close proximity to the preparer; the yield of soybean plants and the desired quality and characteristics of the beans are increased; the equipment that harvests soybeans also conducts post-harvest operations that condition the beans for making tofu; this equipment is smaller and more compact, which accommodates localized production; and methods of packaging, storing, and shipping soybeans or tofu are more integrated, thereby consuming less energy and taking less time. In these instances, advances in technology are applied to the value-added equation, dramatically altering the value package. The result is a system utilizing less costly and more productive equipment, requiring fewer inputs and less labor, and deeply embedding human knowledge and experience into new processes and tools. This has the potential to be transformational, and in relatively short order, too!

While the example of soy may represent a somewhat narrow space within which profound change can be noted, it does highlight where and how value-added steps are enabled by technology. These changes can be witnessed in a broader sense through the lens of large social and economic “eras.” The first of these, industrialization, brought developments in technology to bear on centralizing facilities, equipment, and people in the production process, where capital investments could be amortized through economies of scale.

As production technologies become scalable, logistics become more integrated and efficient, and information and communication technologies become more pervasive, powerful, and responsive, manufacturing operations are dispersed to areas where lower-cost skilled or tractable labor is available. This is the impetus for “globalization.” Attendant to the distribution of manufacturing capability is the transfer of technology and subject matter expertise, which significantly increases the technological competence of the lower-cost workforce. In this regard, globalization heightens the ability of people to utilize new technologies when presented and results in a more evenly distributed capability worldwide.

This puts us on the brink of the next era: localization. The embedded link goes to one of my earlier postings about this phenomenon, so I will not wax on about it again here. However, one quick observation: localization is the inevitable outcome of technology continuing to cost less, get stronger, fit into smaller spaces, run faster, be embedded in more operations, streamline processes, and sense, respond, adapt, learn, and sustain itself despite problems and challenges. To put such an imperative into perspective, the more we transfer technology from one place to another under the auspices of globalization, the more potential we place in the hands of the recipients to utilize those technologies in developing localized applications. Constant application of technology that packs more punch at lower cost is what SUSTAINS the drive toward localization. Without technology, localization would merely be an updated term for the back-to-the-land movement of some 40 years ago. While localization may imply a different lifestyle choice, it actually honors well-deserved quality-of-life factors while continuing to take advantage of what an improved standard of living provides.

What happens beyond localization as technology continues its trek to become smaller, faster, stronger, etc.? Imagine assembling the end product from molecules – at the point of utilization – precisely at the time it is needed. Yes. Get small enough and one is into the basic building blocks of material: molecules. This is the realm of nanotechnology, specifically, molecular manufacturing.

While such a concept has the earmarks of science fiction or the paranormal, and indeed there are many who contend it is one or both, technology will continue to shrink the distance from production to utilization until the two are as close to the same as possible. At that point, the material manifestation will have the immediacy and convenience of what is conceived virtually. The development timeline for molecular manufacturing suggests that useful output rests some distance in the future and that it will come at considerable expense.

This time is needed. Eric Drexler, one of the leading thinkers in the field of nanotechnology, co-founder of the Foresight Institute, and currently Chief Technical Advisor for Nanorex, Inc., is a clear advocate for “responsible nanotechnology.” Citing the hypothetical possibility of the world turning into “gray goo” should molecular nanotechnology run amok, Drexler advises the imposition of a stringent ethical framework on these technologies before they are endowed with the capability of self-replication. Not bad counsel, regardless of whether one buys into Drexler’s future vision for nanotechnology.

And maybe that’s the reason we need to spend time in localization before leaping ahead to what’s next. It is through the strength of the community experience that we learn to act upon our values as a society rather than default to the strength and survival of the fittest individuals. This is the intent behind the Nanoethics Group. As an extract from their mission states, “By proactively opening a dialogue about the possible misuses and unintended consequences of nanotechnology, the industry can avoid the mistakes that others have made repeatedly in business, most recently in the biotech sector – ignoring the issues, reacting too late and losing the critical battle of public opinion.”

Yes. One can only imagine what happens if the machine – nanotechnology, in this instance – has the unfettered capacity to choose who survives with no more ethical framework in place to guide it than the ones we humans use today…maybe we are not quite ready for human equivalence!

Originally posted to New Media Explorer by Steve Bosserman on Tuesday, July 10, 2007